...

- first the download program needs to know which datasets you are interested in;

- then you have to launch the appropriate script in Powershell PowerShell to download them.

Step 1 : retrieve the list of your (selected) datasets

...

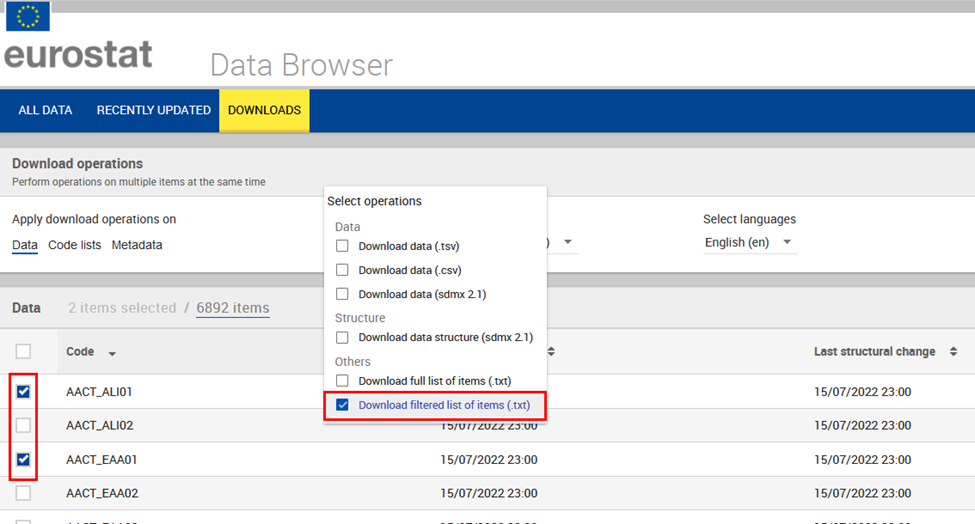

- Go to the Data Browser Downloads page

- Select the datasets you are interested in. In the example below: AACT_ALI01 and AACT_EAA01

- In ‘Download operations’‘Bulk actions’, select ‘Download filtered list of items (txt)’ selection list’ as shown in the screenshot below,

- You will get a zip file with a text file named FilteredSelection_Items_List_EN.txt containing tab-separated values.

- Do not forget to unzip your file and save it for example in your user folder ‘C:\Users\your user name\FilteredSelection_Items_List_EN.txt’.

- Example :

Download sample file that contains the listing as selected in above screenshot.

...

| Info | ||

|---|---|---|

| ||

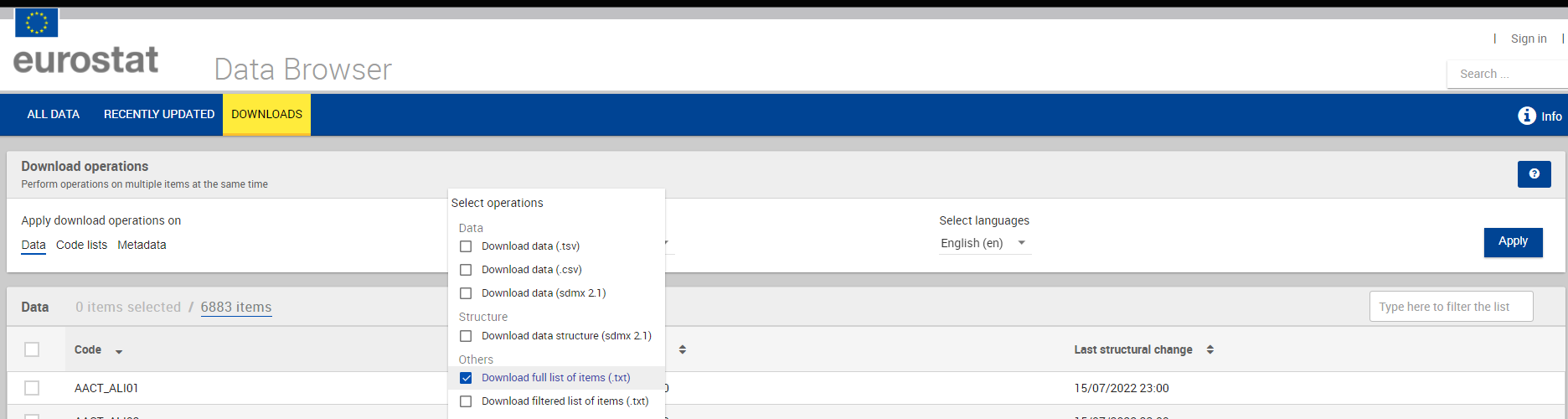

The complete list of datasets can be downloaded by using ‘Download full list of items (txt)’ inventory’ as used in next example. |

Step 2 : download the data files

...

By connecting a few Powershell cmdlets (Import-CSV, ForEach-Object and WebClient.DownloadFile), one can easily you should be able to download the wanted desired content from the specified URL (SDMX-CSV in this example) to the current a folder.

| Code Block | ||

|---|---|---|

| ||

Import-CSV .\FilteredSelection_Items_List_EN.txt -Delimiter "`t" | ForEach-Object { (New-Object System.Net.WebClient).DownloadFile($_."Data download url (csv)", "$pwd\$($_.Code).csv") } |

...

| Code Block | ||

|---|---|---|

| ||

Import-CSV .\FilteredSelection_Items_List_EN.txt -Delimiter "`t" | ForEach-Object { (New-Object System.Net.WebClient).DownloadFile("$($_."Data download url (csv)")&compressed=true", "$pwd\$($_.Code).csv.gz") } |

...

| Code Block | ||

|---|---|---|

| ||

# from https://securitytidbits.wordpress.com/2017/04/14/powershell-and-gzip-compression/comment-page-1/

Function Gunzip-File([ValidateScript({Test-Path $_})][string]$File){

$srcFile = Get-Item -Path $File

$newFileName = Join-Path -Path $srcFile.DirectoryName -ChildPath $srcFile.BaseName

try

{

$srcFileStream = New-Object System.IO.FileStream($srcFile.FullName,([IO.FileMode]::Open),([IO.FileAccess]::Read),([IO.FileShare]::Read))

$dstFileStream = New-Object System.IO.FileStream($newFileName,([IO.FileMode]::Create),([IO.FileAccess]::Write),([IO.FileShare]::None))

$gzip = New-Object System.IO.Compression.GZipStream($srcFileStream,[System.IO.Compression.CompressionMode]::Decompress)

$gzip.CopyTo($dstFileStream)

}

catch

{

Write-Host "$_.Exception.Message" -ForegroundColor Red

}

finally

{

$gzip.Dispose()

$srcFileStream.Dispose()

$dstFileStream.Dispose()

}

}

Import-CSV .\FilteredSelection_Items_List_EN.txt -Delimiter "`t" | ForEach-Object {

(New-Object System.Net.WebClient).DownloadFile("$($_."Data download url (csv)")&compressed=true", "$pwd\$($_.Code).csv.gz")

Gunzip-File "$pwd\$($_.Code).csv.gz"

Remove-Item "$pwd\$($_.Code).csv.gz"

} |

...

This example is similar to the first one but the dataset selection is now part of the script and not done in the Data Browser interface using the ‘Download filtered list of items (txt)’selection list’.

The script is simply based on the file ‘Download full list of items (txt)’ inventory’ that has to be downloaded first.

Pre-condition : having the full list of datasets

Along with your download in Data Browser, From the Downloads section you can request to receive the full list of items, via Download inventory

You will find in your archive download a file named Full_Items_List_ENinventory.txt that contains tab-separated values. This is the same format as the filtered one used in previous example.

...

Depending on your need you could further develop your script., for example start with automating teh download of inventory.txt from https://ec.europa.eu/eurostat/api/dissemination/files/inventory?type=data&lang=en

| Warning |

|---|

Please be cautious to verify |

| Warning |

Please be cautious to verify

Otherwise you could easily start downloading the complete scope of Eurostat data, that would require a large amount of disk space. |

Alternate execution : select and download the data files that are more recent than a specified date

In case you download data once a week / once a month, you could skip data without updates since your last execution time (to be set manually in the script)

| Code Block |

|---|

Import-CSV .\inventory.txt -Delimiter "`t" | ForEach-Object {

If($_.Code.StartsWith("TPS")) { # download only TPS datasets

$compareDate = [datetime]::ParseExact('31-05-2024', 'dd-MM-yyyy', $null)

$datasetDate = [datetime]::ParseExact($_."Last data change", 'yyyy-MM-d\THH:mm:ssK', $null)

If($datasetDate -ge $compareDate) { # download only dataset with last update date after

Write-Host Download $_.Code because last data change $datasetDate is more recent than $compareDate

(New-Object System.Net.WebClient).DownloadFile($_."Data download url (csv)", "$pwd\$($_.Code).csv")

}

}

} |

Extension: validate and re-download in case of API async response

Even if data files are prepared in advance, it may seldom happen that instead of data an "API async response" is present in the final file.

| Code Block |

|---|

<env:Envelope xmlns:env="http://schemas.xmlsoap.org/soap/envelope/">

<env:Header/>

<env:Body>

<ns0:syncResponse xmlns:ns0="http://estat.ec.europa.eu/disschain/soap/extraction">

<processingTime>412</processingTime>

<queued>

<id>98de05ea-540a-43d3-903b-7c9e14faf808</id>

<status>SUBMITTED</status>

</queued>

</ns0:syncResponse>

</env:Body>

</env:Envelope> |

Please see API - Detailed guidelines - Asynchronous API for more details.

Following script is proposed to validate your downloaded files

This script takes the assumption that you are working in an initially empty folder where all the CSV files present are from a download ran recently.

It is proposed to save this script in a powershell executable file (extension ps1) so you can run and see its output in the windows PowerShell terminal

| Code Block | ||||

|---|---|---|---|---|

| ||||

$folderPath = "." # work in current folder

$fileExtension= "*.csv" # scan files with csv extensions"

$pattern = "^<env:Envelope" #search for file with particular async response pattern in first line"

$inventoryFile = "inventory.txt"

# Get all files of specified extension in the specified directory

$files = Get-ChildItem -Path $folderPath -Filter $fileExtension

# Display count in terminal

Write-Host Searching in $files.Length files for API async responses

# Initialize an empty array to store matching filenames

$matchingDatasetCodes = @()

foreach ($file in $files) {

# Read the first line of the file

$firstLine = Get-Content $file.FullName -First 1

# Check if the first line matches the pattern

if ($firstLine -match $pattern) {

# collect dataset code

$matchingDatasetCodes += [System.IO.Path]::GetFileNameWithoutExtension($file)

}

}

# Uncomment next line to Write the matching dataset codes to a text file

# $matchingDatasetCodes | Out-File -FilePath "Output.txt"

# Report in terminal about matching dataset codes

Write-Host Found $matchingDatasetCodes.Length with API async responses

# Redo download for matching dataset codes

Import-CSV .\$inventoryFile -Delimiter "`t" | ForEach-Object {

If($matchingDatasetCodes -contains $_.Code) {

Write-Host Re-downloading $_.Code

(New-Object System.Net.WebClient).DownloadFile($_."Data download url (csv)", "$pwd\$($_.Code).csv")

}

}

|

Example 3: keeping up to date for a single dataset in Node.js

...

- you must have a working instance of Node.js runtime installed on your computer

- Save below script to a file

- Execute it as mentioned in https://nodejs.devorg/en/learn/command-line/run-nodejs-scripts-from-the-command-line/)

| Code Block | ||||

|---|---|---|---|---|

| ||||

#!/usr/bin/env node

// library to make HTTP calls

const fetch = require('node-fetch');

// library to write to the filesystem

const fs = require('fs');

//util method to write to the filesystem

const downloadFile = (async (url, path) => {

const res = await fetch(url);

const fileStream = fs.createWriteStream(path);

await new Promise((resolve, reject) => {

res.body.pipe(fileStream);

res.body.on("error", reject);

fileStream.on("finish", resolve);

});

});

//last time program was run (you should change this value or make it a parameter of your script)

const lastCheck = '2020-09-19T18:00';

// online data code to lookup (you should change this value or make it a parameter of your script)

const onlineDataCode = "T2020_10";

(async () => {

//retrieve information on dataset in JSON

const response = await fetch('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/dataflow/ESTAT/' + onlineDataCode + '?format=json');

const json = await response.json();

//extract last data udpate date

var lastUpdateDate = null;

for (var i = 0 ; i < json.extension.annotation.length; i++) {

//console.log(json.extension.annotation[i]);

if (json.extension.annotation[i].type == "UPDATE_DATA")

lastUpdateDate = json.extension.annotation[i].date;

}

//compare last data update date with our own

if (Date.parse(lastUpdateDate) > Date.parse(lastCheck)) {

console.log(onlineDataCode + " need update " + " last updated at " + lastUpdateDate);

//download needed format (remove the line you do not need)

console.log("Downloading data in TSV for " + onlineDataCode );

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/data/' + onlineDataCode + '?format=TSV', onlineDataCode +'.tsv');

console.log("Downloading data in SDMX-CSV for " + onlineDataCode );

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/data/' + onlineDataCode + '?format=SDMX-CSV', onlineDataCode +'.sdmx.csv');

console.log("Downloading data in SDMX-ML 2.1 for " + onlineDataCode );

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/data/' + onlineDataCode , onlineDataCode +'.sdmx.xml');

console.log("Downloading structure in SDMX-ML 2.1 for " + onlineDataCode );

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/dataflow/ESTAT/' + onlineDataCode +'?references=descendants&details=referencepartial', onlineDataCode +'.sdmx.structure.xml');

// or extract specific time-series in json-stat to feed visualisations..

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/sdmx/2.1/data/' + onlineDataCode + '/A.....FR+LU+DE?format=JSON', onlineDataCode +'_FR_LU_DE.json');

// or use Eurostat statistics query format, because SDMX REST have may be less readable depending the query (may have too many dots, need to know the order of dims)

downloadFile('https://ec.europa.eu/eurostat/api/dissemination/statistics/1.0/data/' + onlineDataCode + '?geo=FR&geo=LU&geo=DE', onlineDataCode +'_FR_LU_DE.stats.json');

} else {

console.log(onlineDataCode + " already up to date " + " last updated at " + lastUpdateDate);

}

})(); |

...